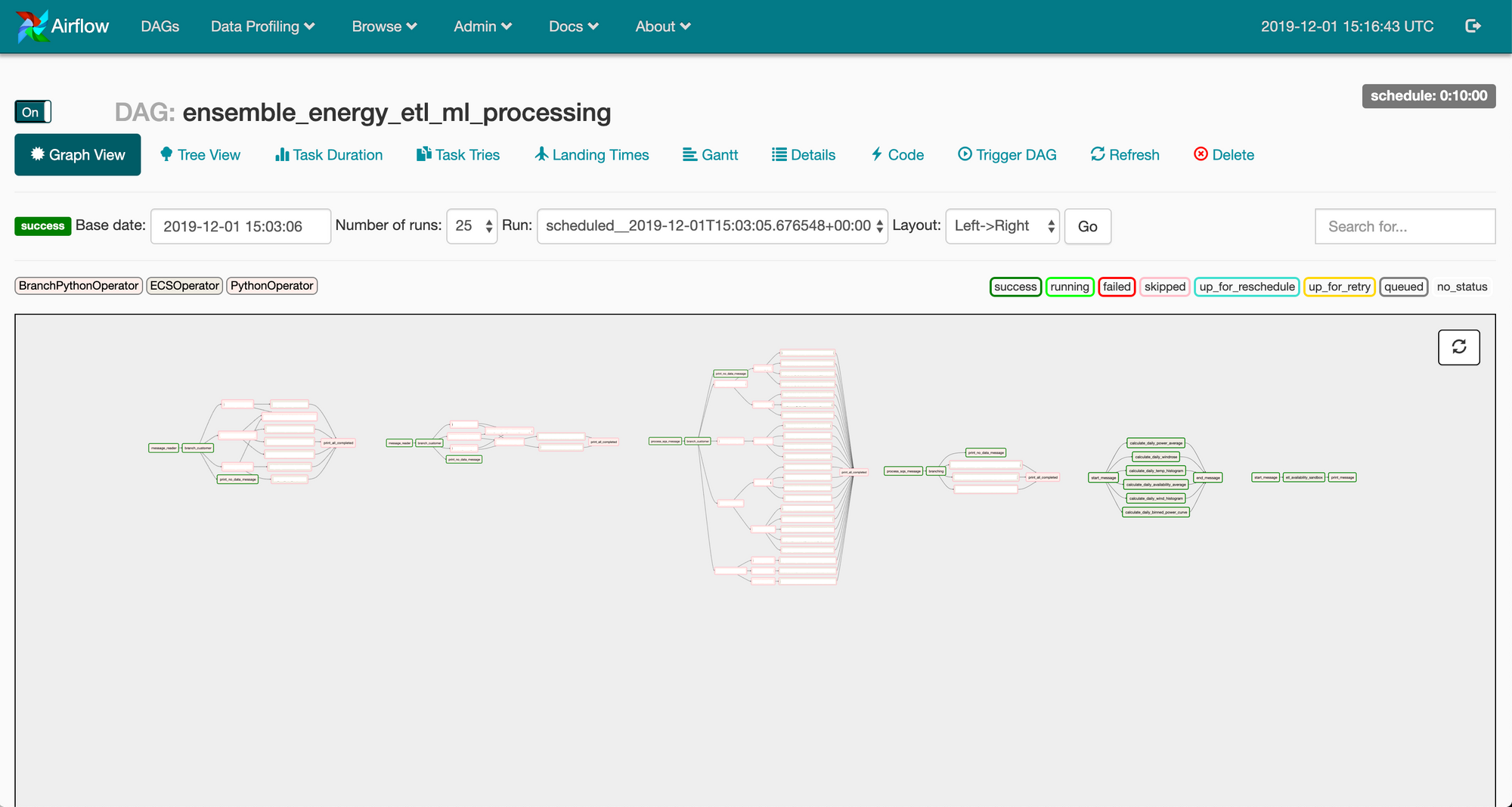

Apache Airflow is open-source software, created at Airbnb, for building, monitoring, and managing workflows. It has been widely adopted by companies including Slack and Google (Google Cloud Composer is built on Airflow).

“After adapting to Airflow, one of our many achievements at Kayzen has been scheduling a large number of parallel jobs without the need to tackle deadlocks or complicated code blocks. We have been able to increase the efficiency of some of our ETLs by more than 50 percent with ease.” – Servesh Jain, Co-Founder & CTO

ETL pipelines are one of the most commonly used day-to-day process workflows in the majority of IT companies today. ETL refers to the group of processes that extract data, transform it, and load it from one place to another, which is often necessary to enable deeper analytics and business intelligence.

Kayzen’s state-of-the-art prediction models are trained on real-time data, which also creates a need to reprocess delayed data. An example of delayed data is the billing of impressions, which can take place up to 48 hours after bidding. We therefore have to reprocess the previous 2 days of data every hour so that our data stays up to date.

Since we deal with Big Data, we need a fast and easily manageable workflow management system to handle all our scheduled ETLs efficiently. Adopting Airflow has helped us build, scale, and maintain our ETL pipelines efficiently, with less effort spent on infrastructure deployment and maintenance. Airflow lets us manage all our jobs from one place, review the execution status of each job, and make better use of our resources through its built-in parallel processing capabilities.

Earlier we were using Jenkins to build our ETL pipelines. Jenkins is an automation server used for continuous integration and continuous deployment (CI/CD). By default, Jenkins does not provide any workflow management capabilities, so we had to add plugins on top of it to manage our workflows. But this was just making things harder to manage. To clarify my point, I will describe certain scenarios we encountered during our work that made us rethink our methods.

Scenario one: To create upstream and downstream jobs, i.e. to trigger a job after a particular job finishes, we need a dependency plugin.

Scenario two: A certain job X runs every hour, calculates some parameters and produces some results, or collects some data. How do we pass those parameters or results to the next job?

Scenario three: Reprocessing the data for the last Y days while processing Z tasks in parallel.

Scenario four: Due to some issue in the network connection, a job fails. How do we retry the job without manually running it each time something fails?
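The hourly reprocessing of the previous 2 days, and scenario three more generally, comes down to two questions: which hourly partitions does a given run need to touch, and how many of them are processed at once. The sketch below is plain Python, not Airflow code, and rests on assumptions of ours: data is partitioned by hour, and `process_partition` is a hypothetical stand-in for the real ETL step. The names `hourly_partitions` and `reprocess` are likewise illustrative.

```python
from concurrent.futures import ThreadPoolExecutor
from datetime import datetime, timedelta


def hourly_partitions(run_time: datetime, days: int) -> list[datetime]:
    """Return the start of every full hour in the `days` days before run_time, oldest first."""
    # Truncate to the top of the current hour; the hour in progress is not reprocessed.
    end = run_time.replace(minute=0, second=0, microsecond=0)
    return [end - timedelta(hours=h) for h in range(days * 24, 0, -1)]


def process_partition(hour_start: datetime) -> str:
    """Hypothetical stand-in for the real ETL step; it only labels the partition."""
    return hour_start.strftime("%Y-%m-%d %H:00")


def reprocess(run_time: datetime, days: int, max_parallel: int) -> list[str]:
    """Reprocess the last `days` days with at most `max_parallel` partitions in flight."""
    partitions = hourly_partitions(run_time, days)
    with ThreadPoolExecutor(max_workers=max_parallel) as pool:
        # pool.map yields results in input order, regardless of completion order.
        return list(pool.map(process_partition, partitions))


if __name__ == "__main__":
    done = reprocess(datetime(2020, 3, 10, 14, 25), days=2, max_parallel=8)
    print(len(done), done[0], done[-1])
    # prints: 48 2020-03-08 14:00 2020-03-10 13:00
```

With Y = 2 days the window always contains 48 hourly partitions, and Z corresponds to `max_parallel`. In a workflow manager such as Airflow, the window and the concurrency limit would normally come from the scheduler's own configuration instead of hand-written code, so treat this only as an illustration of the logic.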
